Using Nash Bargaining and LLMs to Systematize Fairness

Computing fair agreements from natural-language preferences

April 20, 2026 by Ian Clarke

When my wife and I hired a mediator for our prenup in 2018, I expected a systematic process: gather each person’s concerns, weigh the tradeoffs, propose something fair. Our mediator was great at the conversation work, helping us articulate what mattered. But synthesizing those preferences into a concrete agreement was left to us.

That experience got me thinking about negotiation more broadly. Without a systematic process, the outcome tends to favor whoever is more assertive or better at strategic bluffing. Research suggests that agreeable people tend to earn less over their lifetimes, not because they’re less competent but because they’re disadvantaged by systems that reward playing hardball.

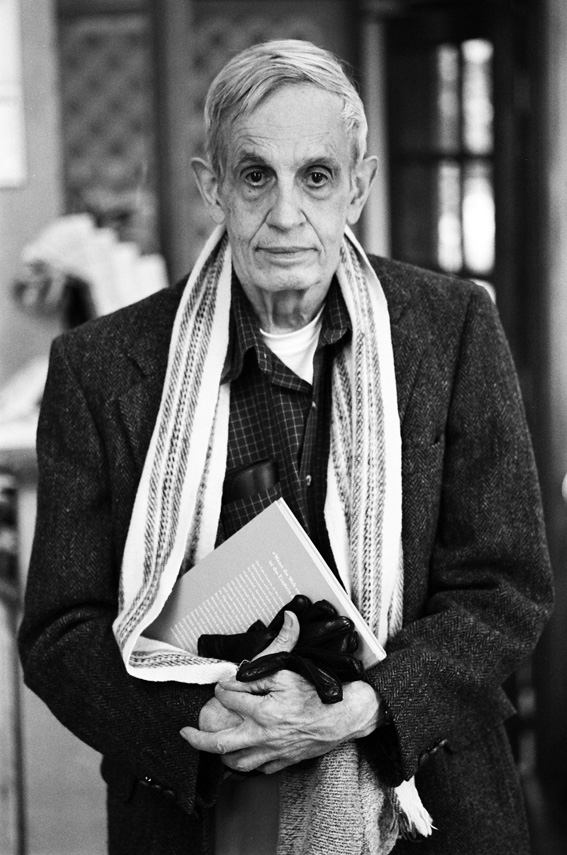

I’d read the usual negotiation books, but the aha moment was discovering John Nash’s 1950 bargaining solution.

Nash, best known from A Beautiful Mind, proposed a rigorous method for resolving conflicts of interest between two parties. His solution assumes each participant has a utility function: a way of scoring how much they value different outcomes. It then identifies the agreement that maximizes the product of their gains over what they’d get by walking away.
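
To make “maximizes the product of their gains” concrete, here is a minimal sketch over a handful of candidate agreements. The utility numbers, the candidate names, and the helpers `nash_product` and `nash_solution` are made up for illustration; this is not Mediator.ai’s code.

```python
from math import prod


def nash_product(utilities, walk_away):
    """Product of each party's gain over their walk-away utility."""
    gains = [u - d for u, d in zip(utilities, walk_away)]
    # A deal that leaves anyone worse off than walking away is not viable.
    if any(g < 0 for g in gains):
        return float("-inf")
    return prod(gains)


def nash_solution(candidates, walk_away):
    """Return the name of the candidate with the largest Nash product."""
    return max(candidates, key=lambda name: nash_product(candidates[name], walk_away))


# Hypothetical utilities (party A, party B) for three candidate agreements.
candidates = {
    "A gets most": (9.0, 3.0),
    "even split": (6.0, 6.0),
    "B gets most": (2.0, 9.0),
}
walk_away = (1.0, 2.0)  # assumed utility each party gets with no deal

best = nash_solution(candidates, walk_away)
print(best)  # even split
```

With these numbers the products are 8, 20, and 7, so the even split wins even though each lopsided deal gives one party a higher individual score.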

What makes Nash’s solution compelling is that it’s provably fair in ways that feel intuitive. No value is left on the table: you can’t make one side better off without making the other worse off. Only relative preferences matter, not how you measure them, so rescaling one party’s utility scores (say, from a 1 to 10 scale to a 1 to 100 scale) doesn’t change the recommended agreement. And the benefit of reaching a deal gets divided based on how much each side gains compared to walking away. It doesn’t care who’s louder or more stubborn.
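
The “only relative preferences matter” property can be checked directly. The sketch below uses invented numbers and hypothetical helpers (`nash_best`, `rescale_party`): it stretches and shifts party B’s scores, applies the same transformation to B’s walk-away value, and confirms the winner is unchanged.

```python
def nash_best(candidates, walk_away):
    """Name of the candidate that maximizes the product of gains."""
    def product(utilities):
        gains = [u - d for u, d in zip(utilities, walk_away)]
        if min(gains) < 0:
            return float("-inf")
        result = 1.0
        for gain in gains:
            result *= gain
        return result

    return max(candidates, key=lambda name: product(candidates[name]))


def rescale_party(candidates, walk_away, party, scale, shift):
    """Apply u -> scale * u + shift (scale > 0) to one party's utilities,
    including that party's walk-away value."""
    def transform(utilities):
        values = list(utilities)
        values[party] = scale * values[party] + shift
        return tuple(values)

    rescaled = {name: transform(u) for name, u in candidates.items()}
    return rescaled, transform(walk_away)


# Hypothetical utilities (party A, party B).
candidates = {
    "A gets most": (9.0, 3.0),
    "even split": (6.0, 6.0),
    "B gets most": (2.0, 9.0),
}
walk_away = (1.0, 2.0)

before = nash_best(candidates, walk_away)
rescaled, rescaled_walk_away = rescale_party(candidates, walk_away, party=1, scale=100.0, shift=50.0)
after = nash_best(rescaled, rescaled_walk_away)
print(before, after)
```

Every gain for party B is multiplied by the same positive factor, so every Nash product is too, and the ordering of candidates stays the same.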

The catch is defining each party’s utility function. Even for everyday negotiations (splitting chores, selling a house, dividing expenses), capturing your true preferences in a formal utility function is beyond what most people can (or want to) do. And without that, the Nash solution remains a mathematical ideal, not a practical tool.

Translating Natural Language into Math

You don’t need people to define their own utility functions. You just need to talk to them. Each party has a conversation with an LLM-powered assistant that builds a picture of what they care about and why.

The question is how to turn that conversation into a utility function. Think about how chess or tennis rankings work: players don’t get scored in isolation, their ratings emerge from head-to-head matches. I take the same approach with agreements. Given what a party has said, the LLM is asked hundreds of one-on-one questions: “would this person prefer agreement A or agreement B?” From those pairwise comparisons, we can infer a utility function (approximate but workable) that ranks any new agreement without anyone having to assign numbers directly.
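
One standard way to turn pairwise outcomes into scores is a Bradley-Terry model, a close relative of the Elo ratings used in chess. The post doesn’t say which model Mediator.ai fits, so treat this as an assumption-laden sketch: `fit_utilities`, its learning-rate and regularization settings, and the hard-coded comparisons are all hypothetical.

```python
import math


def fit_utilities(n_items, comparisons, steps=500, lr=0.1, l2=0.01):
    """Fit Bradley-Terry scores by gradient ascent on the log-likelihood.

    comparisons: list of (winner_index, loser_index) pairs.
    Returns one score per item; a higher score means more preferred.
    """
    scores = [0.0] * n_items
    for _ in range(steps):
        # A small L2 penalty keeps scores finite when data is sparse.
        grad = [-l2 * s for s in scores]
        for winner, loser in comparisons:
            # Modelled probability that `winner` is preferred to `loser`.
            p_win = 1.0 / (1.0 + math.exp(scores[loser] - scores[winner]))
            grad[winner] += 1.0 - p_win
            grad[loser] -= 1.0 - p_win
        scores = [s + lr * g for s, g in zip(scores, grad)]
    return scores


# Hypothetical judgments over three agreements (indices 0, 1, 2).
# In the workflow described above, each pair would come from an LLM answer
# to "would this person prefer A or B?"; here they are hard-coded.
comparisons = [(0, 1), (0, 1), (1, 0), (0, 2), (0, 2), (1, 2), (1, 2), (2, 1)]

scores = fit_utilities(3, comparisons)
ranking = sorted(range(3), key=lambda i: scores[i], reverse=True)
print(ranking)
```

Note that the judgments are allowed to disagree with each other (agreement 1 beats agreement 0 once). The fitted scores absorb that noise instead of requiring a perfectly consistent ordering.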

The pairwise framing helps on the LLM side too. Models are unreliable at absolute scoring: asking one to rate a proposal from 1 to 100 produces an answer that drifts with the model version and the phrasing of the prompt. Asking “given this person’s priorities, would they prefer A or B?” is a narrower, more constrained question. That’s the kind of question we can actually trust at scale, and it’s what the utility estimation is built on. The whole approach rests on that approximation being stable enough to be useful.

Searching for the Best Agreement

Once we can compare any two agreements from each party’s perspective, we need to search for the one that maximizes the Nash product across everyone. Mediator.ai uses a genetic algorithm: start with a pool of candidate agreements, then repeatedly combine and mutate them to breed better ones. Crossover takes two high-scoring agreements and splices their terms together: deadline from one, payment split from another. Mutation tweaks individual terms: adjusting a dollar amount by 10%, shifting a date, swapping a clause. Each candidate gets scored against every party’s utility function, and the Nash product determines which survive to the next generation.
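
The loop described above can be sketched in a few dozen lines. Everything specific here is an assumption for illustration: the two-term agreement (`share_a`, `deadline`), the stand-in utility functions, the walk-away values, the pool size, and the choice to keep the top half of each generation.

```python
import random

WALK_AWAY = (2.0, 2.0)  # assumed walk-away utilities for parties A and B


def utility_a(deal):
    # Stand-in for A's inferred utility: wants a larger share and more time.
    return deal["share_a"] * 10 + deal["deadline"] * 0.2


def utility_b(deal):
    # Stand-in for B's inferred utility: wants a larger share and less delay.
    return (1 - deal["share_a"]) * 10 - deal["deadline"] * 0.1


def nash_score(deal):
    gain_a = utility_a(deal) - WALK_AWAY[0]
    gain_b = utility_b(deal) - WALK_AWAY[1]
    if gain_a < 0 or gain_b < 0:
        return float("-inf")  # someone would rather walk away
    return gain_a * gain_b


def crossover(rng, parent_1, parent_2):
    # Take each term from one parent or the other.
    return {term: rng.choice((parent_1, parent_2))[term] for term in parent_1}


def mutate(rng, deal):
    # Nudge one term by up to 10%, then clamp to its valid range.
    child = dict(deal)
    term = rng.choice(list(child))
    child[term] *= rng.uniform(0.9, 1.1)
    child["share_a"] = min(max(child["share_a"], 0.0), 1.0)
    child["deadline"] = min(max(child["deadline"], 1.0), 30.0)
    return child


def evolve(seed=0, pool_size=20, generations=40):
    rng = random.Random(seed)
    pool = [
        {"share_a": rng.random(), "deadline": rng.uniform(1, 30)}
        for _ in range(pool_size)
    ]
    initial_best = max(nash_score(deal) for deal in pool)
    for _ in range(generations):
        pool.sort(key=nash_score, reverse=True)
        survivors = pool[: pool_size // 2]  # top half lives on unchanged
        children = [
            mutate(rng, crossover(rng, *rng.sample(survivors, 2)))
            for _ in range(pool_size - len(survivors))
        ]
        pool = survivors + children
    return initial_best, max(pool, key=nash_score)


initial_best, best = evolve()
print(initial_best, nash_score(best), best)
```

Because the survivors carry over unchanged, the best Nash product in the pool can never get worse from one generation to the next. That is a property of this sketch, not a claim about the production system.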

The mutations themselves are driven by small Lua scripts called “mutators,” each representing a different strategy for adjusting terms. Each runs in its own isolated environment. Over time, mutators that consistently produce higher-scoring offspring get selected more often.
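
A simple way to get “mutators that do well get picked more often” is to sample each mutator in proportion to its observed success rate. The sketch below is in Python and does not model the Lua scripts or their sandboxing. The `MutatorPicker` class, the mutator names, and the success-rate weighting are all hypothetical.

```python
import random


class MutatorPicker:
    """Pick mutators with probability proportional to their success rate."""

    def __init__(self, names, rng):
        self.rng = rng
        # Start every mutator with one notional success in two trials so no
        # strategy is ruled out before it has been tried.
        self.successes = {name: 1 for name in names}
        self.trials = {name: 2 for name in names}

    def weight(self, name):
        return self.successes[name] / self.trials[name]

    def pick(self):
        names = list(self.trials)
        weights = [self.weight(name) for name in names]
        return self.rng.choices(names, weights=weights)[0]

    def record(self, name, improved):
        """Log whether a mutator's offspring outscored its parent."""
        self.trials[name] += 1
        if improved:
            self.successes[name] += 1


picker = MutatorPicker(["nudge_amount", "shift_date"], random.Random(0))

# Pretend "nudge_amount" improved its offspring 8 times and "shift_date" never did.
for _ in range(8):
    picker.record("nudge_amount", improved=True)
    picker.record("shift_date", improved=False)

print(picker.weight("nudge_amount"), picker.weight("shift_date"))  # 0.9 0.1
```

Since no weight ever reaches zero, a struggling mutator still gets occasional chances to prove itself later in the search.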

You interact with a chat-based assistant that asks questions, helps clarify your priorities, and models your preferences in the background. Each party’s assistant runs in its own private environment and represents that party’s interests during the optimization process.

That mediator my wife and I hired was good at the empathy part, getting us to articulate what we actually cared about. What was missing was the math: a way to take those preferences and turn them into something principled. That’s the gap Mediator.ai tries to fill.

Limits

Nash’s fairness guarantees only hold if the utility functions are right. Inferring them from a conversation is a useful approximation, not a measurement, and two limitations matter most.

Overstating your walk-away point. The system asks each party what they’d do if no deal were reached, and uses that as the baseline for what counts as a fair improvement. Claiming a better alternative than you actually have can shift the proposed deal in your favor — but it cuts both ways. Overstate too much and the system may conclude that no agreement beats your stated alternative, recommending you walk away when there was a perfectly good deal to be had. In practice, honesty is usually the best policy here: not because the math forces it, but because misrepresenting your alternatives is as likely to cost you a good deal as to win you a better one.
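
A toy example shows both effects. The candidate deals and utility numbers are invented, and `propose` is a hypothetical helper that returns nothing when no candidate beats every stated walk-away value.

```python
def propose(candidates, walk_away):
    """Return the candidate with the largest Nash product, or None if no
    candidate strictly beats every party's stated walk-away utility."""
    best_name, best_score = None, 0.0
    for name, utilities in candidates.items():
        gains = [u - d for u, d in zip(utilities, walk_away)]
        if min(gains) <= 0:
            continue  # at least one party would rather walk away
        score = 1.0
        for gain in gains:
            score *= gain
        if score > best_score:
            best_name, best_score = name, score
    return best_name


# Hypothetical utilities (party A, party B).
candidates = {
    "favors A": (9.0, 3.0),
    "even split": (6.0, 6.0),
    "favors B": (2.0, 9.0),
}

honest = propose(candidates, (1.0, 2.0))   # A reports the true alternative
modest = propose(candidates, (5.5, 2.0))   # A overstates somewhat
extreme = propose(candidates, (9.5, 2.0))  # A overstates a lot

print(honest, modest, extreme)  # even split favors A None
```

A moderate overstatement tilts the proposal toward A, but once A’s claimed alternative exceeds anything on the table, the only recommendation left is to walk away.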

Things you didn’t think to mention. The agreement is only as good as the picture the assistant builds of what you care about. If a factor that actually matters never comes up in the conversation, it won’t show up in the final deal. The assistant works to draw out unstated priorities, and draws on what’s typical for the kind of negotiation you’re in, but it can’t surface something neither party raises. Reading the proposed agreement carefully, and noticing what’s missing, is part of using the tool well.

For anything legally binding, the draft still needs a lawyer to make it enforceable.

Where this is starting

I’m starting with low-stakes domains: roommate agreements, household chores, shared parenting plans. These are the kinds of negotiations that don’t justify hiring a lawyer but still benefit from structured fairness. A typical session runs about $2 in LLM compute. Longer term, the targets are prenups, business partnerships, and multiparty deals, though the tool isn’t a substitute for legal advice in any of them.

Early usage has been a surprise in one direction: most sessions have stayed solo. Users work through their own side — sorting out what they actually want, what they could live with, what would be fair to ask for — and never invite the other party. The Nash math obviously can’t run on one side, so those sessions don’t produce a final agreement. But the structured private interview seems to be useful on its own, and that’s a legitimate way to use the tool.

The interesting technical question isn’t whether Nash bargaining is elegant. It is. The question is whether utility models inferred from ordinary language are stable enough, and useful enough, to make that elegance operational. That’s still open, but it’s testable.

Try it at mediator.ai.